Hands-On GPU Programming with Python and CUDA: Explore high-performance parallel computing with CUDA: 9781788993913: Computer Science Books @ Amazon.com

A Complete Introduction to GPU Programming With Practical Examples in CUDA and Python | Cherry Servers

NVIDIA AI on X: "Build GPU-accelerated #AI and #datascience applications with CUDA python. @nvidia Deep Learning Institute is offering hands-on workshops on the Fundamentals of Accelerated Computing. Register today: https://t.co/jqX50AWxzc #NVDLI ...

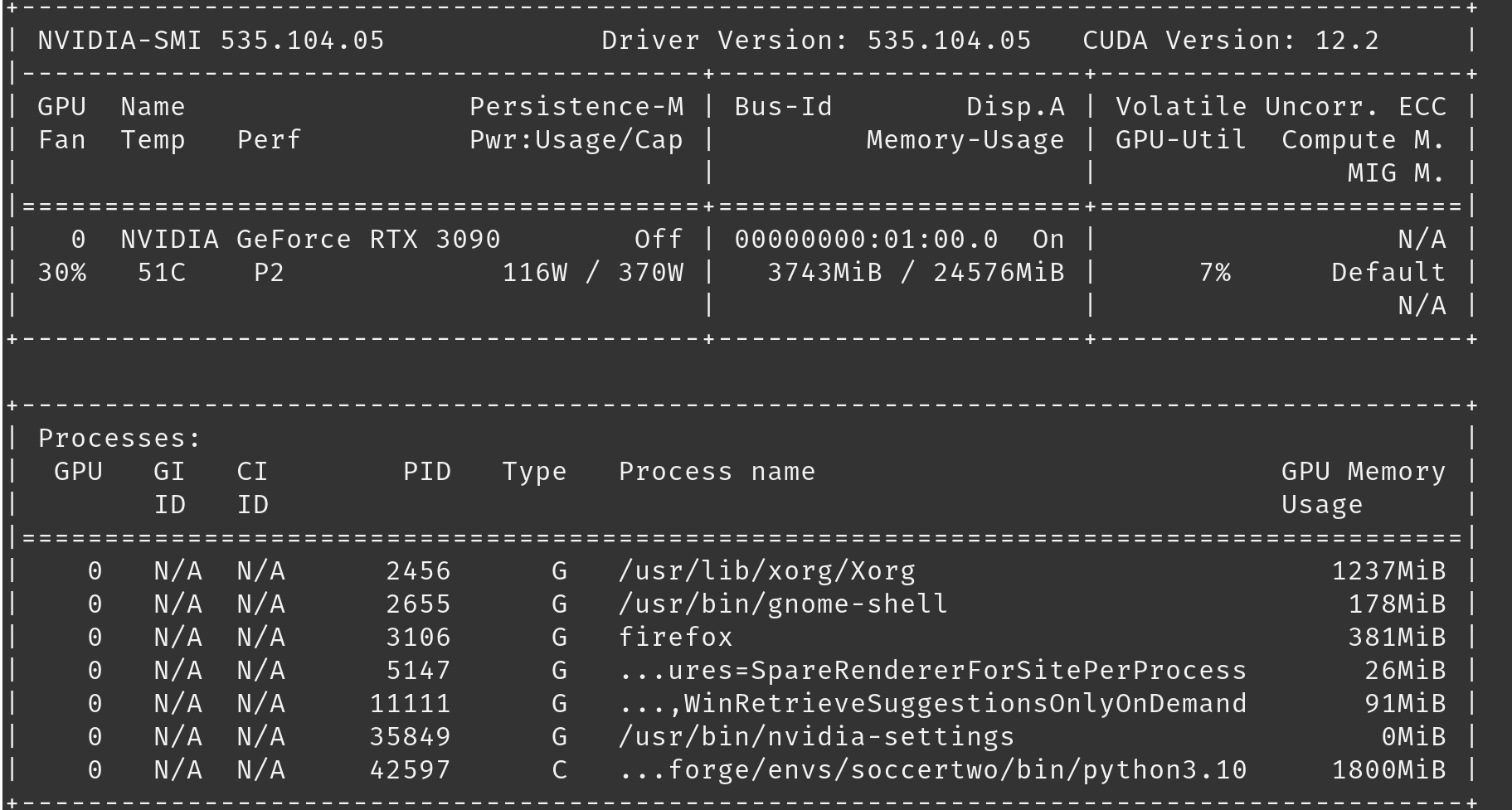

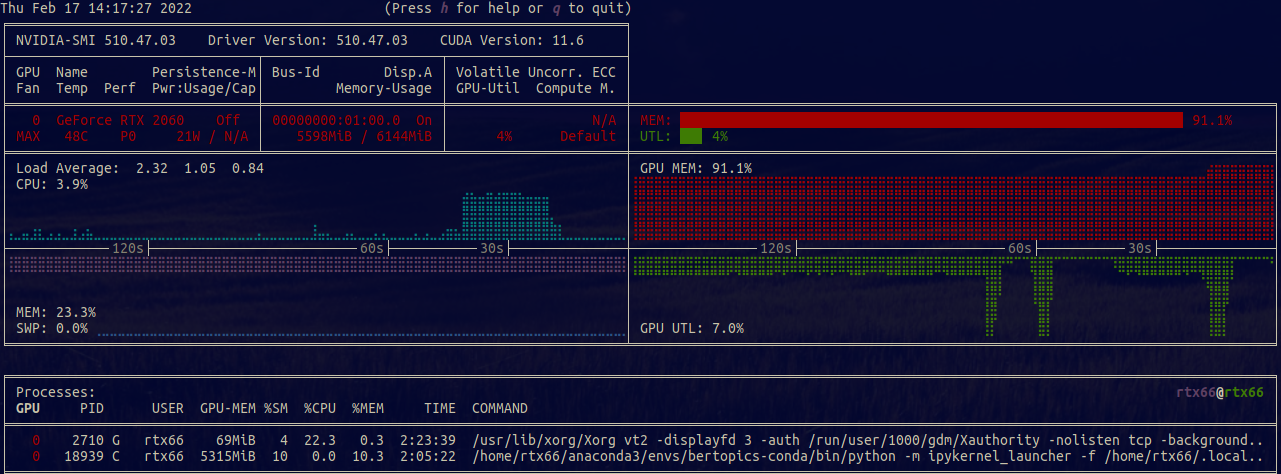

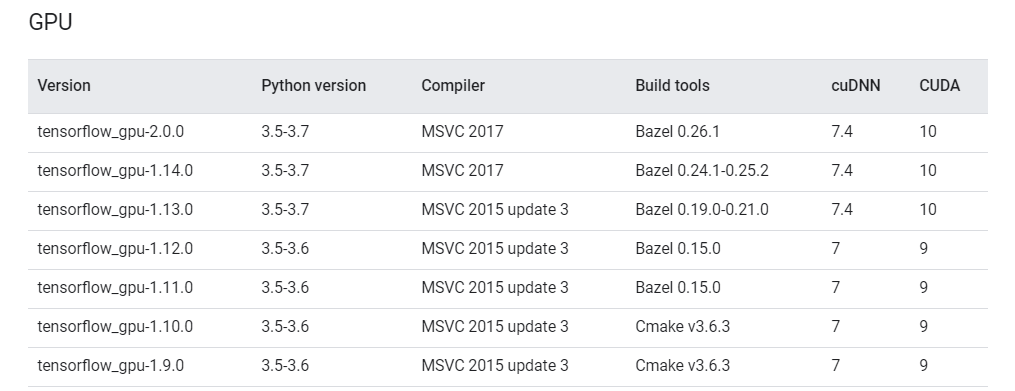

How to Set Up Nvidia GPU-Enabled Deep Learning Development Environment with Python, Keras and TensorFlow

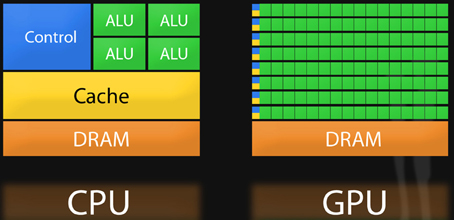

3.1. Comparison of CPU/GPU time required to achieve SS by Python and...